Jan 24, 2024

How Generative AI will power fraud in 2024, and what you can do about it

The Fourthline Team

Whether it's the potential for “superhuman persuasion” or removing the need for anyone to work again, AI continues to capture the imagination and concern of entrepreneurs, the press, and regulators across the world.

But for many banks and fintechs, the rapid adoption of Generative AI poses a much more urgent risk: its potential use for fraud. This is a serious, time-sensitive challenge, and the new wave of fraud is already upon us. A recent survey shows that 68% of consumers noticed an increase in the frequency of spam and scams from November 2022, around when Generative AI tools began to be adopted at scale. And driven by sophisticated AI attacks, global losses from online payment fraud are projected to jump from $38 billion in 2023 to $91 billion in 2028.

With Generative AI (GenAI), criminals have a weapon of mass destruction, which is set to power up over 2024. In this post, we will explore some of the ways it is (already) being leveraged for increasingly sophisticated fraud attempts. We’ll look at how it is evolving and what you can do to get ahead of it.

GenAI is accelerating fraud in two key ways: sophistication and scale

In terms of business, the possibilities for AI are almost endless. But legitimate businesses are limited by regulations, security considerations, the speed at which they can move, the need for a business case, and other factors. Meanwhile, criminals leveraging AI for fraud don’t have these constraints. And the ways that the technology is already being used by bad actors are not only highly innovative but have the potential to do a lot of damage.

Some examples of GenAI-powered fraud include:

AI bots that scrape personal information from sources such as social media platforms and online databases and create convincing fake profiles.

Both well-known public GenAI tools such as ChatGPT and dark web ChatGPT-like “products” such as WormGPT and FraudGPT are being leveraged to create convincing phishing emails and cracking tools, and to support carding (a type of credit card fraud). A related technique is password spraying, where AI generates lists of the most-used passwords in a certain industry and criminals then test these across accounts in a targeted organization.

Deep fake video and voice, along with sophisticated fake IDs made by machine learning, are being used for social engineering attempts or to beat authentication systems during customer onboarding or anti-money laundering (AML) checks.

This is just a small subset of the rapidly evolving and expanding ways in which GenAI is being used for fraud. And it is only the beginning. The number of ways it can be used to beat authentication checks, create synthetic identities, and take over accounts, among other things, is set to rise exponentially. Over 2024, these attacks will scale and become more sophisticated, putting your systems at risk.

How to combat Generative AI fraud

Nonetheless, there are a number of straightforward steps you can already take to mitigate the risk.

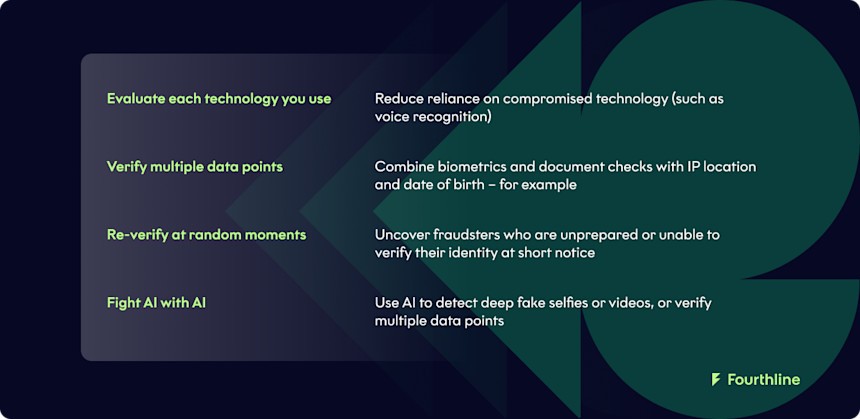

1. Evaluate each technology you use

GenAI tools such as those mentioned above mean that some authentication technologies are seriously compromised. A case in point: voice recognition. This doesn’t mean that you should stop using these technologies completely. But you should consider reducing their emphasis and avoid relying on them too heavily during customer authentication.

2. Verify multiple data points

While criminals are now much more capable of creating convincing deep fake video or voice to beat verification checks with one or two data points, they are not often capable of beating verification of multiple data points. The more data points they have to fake, the more difficult it becomes for them.

Therefore, instead of collecting a couple of data points on a new customer or verifying suspicious activity with the same checks, you should consider how to verify your customer with multiple data points. These might include biometric and document checks combined with IP location and date of birth. This will automatically make your KYC or AML flows much more difficult to beat.
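As a rough illustration of the idea, the minimal Python sketch below approves a customer only when every required data point has been checked and has passed. The check names, the `CheckResult` type, and the `verify_customer` function are hypothetical and chosen for this example; they do not describe any particular vendor's KYC flow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    name: str     # hypothetical check name, e.g. "biometric" or "ip_location"
    passed: bool


def verify_customer(results: list[CheckResult], required: set[str]) -> bool:
    """Approve only if every required data point was checked and passed."""
    passed = {r.name for r in results if r.passed}
    return required <= passed  # a missing check counts as a failure


# Assumed set of data points, taken from the examples in the text above.
REQUIRED = {"biometric", "document", "ip_location", "date_of_birth"}

# A deep fake gets through the biometric and document checks,
# but the IP location does not match.
deepfake_attempt = [
    CheckResult("biometric", True),
    CheckResult("document", True),
    CheckResult("ip_location", False),
    CheckResult("date_of_birth", True),
]
genuine = [CheckResult(name, True) for name in REQUIRED]

print(verify_customer(deepfake_attempt, REQUIRED))  # False
print(verify_customer(genuine, REQUIRED))           # True
```

Requiring all checks to pass, rather than a majority, is a deliberate choice in this sketch: each extra data point is one more thing the fraudster has to fake at the same time.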

3. Re-verify at random moments

Likewise, fraudsters can be good at faking some data points to get through a predetermined check, but are not so good at passing surprise checks. Verifying the identity of an account holder or customer at unexpected moments can help uncover fraudsters who are unprepared or unable to verify their identity at short notice.

A word of caution: while verifying customers at random moments can help beat fraud, it can also cause annoyance amongst legitimate customers. So, making your verification flow seamless and fast is critical.
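One simple way to make checks unpredictable is to trigger them with a small probability on each session, so no fixed schedule exists for a fraudster to prepare for. The sketch below assumes a hypothetical `should_reverify` function and an arbitrary 5% rate; the right rate depends on how much friction your legitimate customers will tolerate.

```python
import random


def should_reverify(rng: random.Random, probability: float = 0.05) -> bool:
    """Decide, per session, whether to trigger a surprise identity check."""
    return rng.random() < probability


# Seeded only so the example is reproducible; a real system would want an
# unpredictable source such as secrets.SystemRandom().
rng = random.Random(42)

sessions = 1000
triggered = sum(should_reverify(rng) for _ in range(sessions))
print(f"{triggered} of {sessions} sessions would be re-verified")
```

Lowering `probability` reduces annoyance for genuine customers at the cost of catching fewer unprepared fraudsters, which is the trade-off described above.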

4. Fight AI with AI

And finally, rather than taking on the impossible task of staying up-to-date with the latest GenAI-based innovations in fraud, you can make use of AI solutions. These can be used to detect deep fake selfies or videos, or verify multiple data points at onboarding and during random security checks.

In fact, leveraging AI is necessary to match the speed, scale, and complexity of fraudulent activities. And if you aren’t already doing so, you will need to in the near future.

Fourthline: your partner to combat Generative AI fraud in 2024

If you take a look at the list of ways to combat GenAI-powered fraud above, Fourthline has a strong proposition for each of them.

1. Verify eight data points with 200+ checks

In the customer onboarding process, Fourthline already collects and verifies eight data points, including Name, Age, Gender, DOB, Location/Address, Nationality, and Place of Birth. Fourthline also performs over 200 checks on identity verification, checking for authenticity, security features, liveness, and more. For example, when checking official IDs, these include constantly changing, difficult-to-duplicate data points such as fonts, spacing, and unique design features, among others.

While a criminal can fake some data points, it is extremely unlikely they would be able to fake eight of them, plus stand up against hundreds of checks.

2. Re-verify seamlessly at chosen moments

Verifying the identity of an existing account holder can be done seamlessly through Fourthline. The customer submits a biometric check, while Fourthline checks a range of phone metadata. The whole process takes only a minute or two, meaning minimal disruption for your customer.

3. Fight deep fakes and other GenAI fraud

Fourthline’s proprietary AI technology is able to stop all kinds of biometric fraud, including stolen or AI-generated photos or videos, silicone masks, and more.

4. Partner with a provider that is constantly innovating

And finally, building fraud-proof solutions for fintechs and banks at scale is our core business. We are constantly innovating and updating our technologies to ensure they stay ahead of any trends, GenAI or otherwise.

Real-life example of Fourthline stopping GenAI-powered fraud

In a recent case, Fourthline noticed that a number of accounts being opened across Europe were being accessed from a wildly different location from the one they were purportedly in. Even more suspiciously, though this was happening in different European countries, many were being accessed from a single location in Benin, West Africa. This highlights two things: the importance of verifying data points that are independent of one another, and the importance of re-verifying your customers at random moments.
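The pattern in this case, many accounts claiming different countries but sharing one access location, can be sketched in a few lines of Python. This is an illustrative sketch only, not Fourthline's actual detection logic: the `AccountAccess` type, the `shared_access_locations` function, the threshold of three accounts, and the sample events are all made up for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountAccess:
    account_id: str
    claimed_country: str  # country given at onboarding
    access_country: str   # country inferred at login, e.g. from the IP address


def shared_access_locations(events, min_accounts=3):
    """Return access countries used by many accounts that claim to be elsewhere."""
    # Deduplicate so repeated logins by one account count only once.
    mismatched = {
        (e.account_id, e.access_country)
        for e in events
        if e.access_country != e.claimed_country
    }
    counts = Counter(country for _, country in mismatched)
    return {c: n for c, n in counts.items() if n >= min_accounts}


# Made-up sample data: three "European" accounts all accessed from Benin (BJ).
events = [
    AccountAccess("a1", "NL", "BJ"),
    AccountAccess("a2", "DE", "BJ"),
    AccountAccess("a3", "ES", "BJ"),
    AccountAccess("a4", "FR", "FR"),
    AccountAccess("a5", "IT", "PT"),
]
print(shared_access_locations(events))  # {'BJ': 3}
```

A single mismatch, such as account `a5`, may just be a customer travelling. It is the cluster of unrelated accounts converging on one location that stands out, which is why the claimed and observed locations need to be independent data points.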

The time to develop a new defense strategy is now

Superhuman persuasion may or may not arrive in the coming year, or even at all. And the end of work is even less likely. Nonetheless, GenAI poses a serious fraud threat. In fact, for all its potential for productivity, AI will also cause massive “gains” in cyber-attacks, scams, fraud, and data breaches, and having a strategy in place now is going to save pain over 2024 and beyond.

To find out more about how you can defend your systems from GenAI-powered fraud, get in touch.