Oct 16, 2020

The Basics of Using AI for ID Verification

Konstantinos Leventis - AI R&D Data Scientist

Fourthline’s KYC platform helps banks, fintechs, insurance brokers and more onboard customers and catch fraud at industry-leading conversion and fraud-detection rates. With 210 data-point checks applied to every identity processed, 95 percent of which are automated, artificial intelligence (AI) plays a key role in Fourthline’s approach to KYC. Through the sequential use of proprietary AI algorithms, Fourthline maximizes accuracy while trimming minutes off ID processing times—without sacrificing security.

Building a solution with advanced image processing

A central part of KYC is, of course, identity verification. As part of our onboarding flow, we require users to submit images of their identity documents for rigorous assessment and risk-scoring. But, before drawing any conclusions, our machines are tasked with detecting, recognizing, and reading the photographic input.

To build an effective and scalable solution, we needed to create a set of algorithms that could treat thousands of different document types in a consistent and standardized way. We knew that across document types, certain foundational steps would remain the same: we would require front-facing and angled images of a user’s document, alongside manual input of the document information. While our onboarding flow guides users through taking these pictures, we needed to account for the extreme degrees of domain variance that are inherent to this type of data collection.

Ultimately, we built a chain of algorithms that detects, adjusts, classifies, and reads each document image. Then based on the data, security features, and consistency checks, we draw conclusions that contribute to the authentication of a user’s submission.

Document detection & recognition

First, the user is asked to submit a front-facing image of their ID document. Our Document Detection algorithm then identifies the document in the image.

From there, the algorithm generates coordinates of the borders of the document, which connect to form what we call a “bounding box”. We use these coordinates to crop and zoom in on the document in question, preserving all areas of the document that would have useful information or security features.

We then use the cropped image generated by our Document Detector to identify the model of the document (origin country, document type). Once we’ve recognized it, we can start processing and assessing the information within the image.

Visual Inspection Zone detection & reading

Our next task is to collect and analyze the information within the document we just recognized. Thanks to the high volume of identities Fourthline processes, our algorithms accommodate over 3500 global document types. Naturally, each of these documents has its own specifications and security features, starting with where information is positioned on the document.

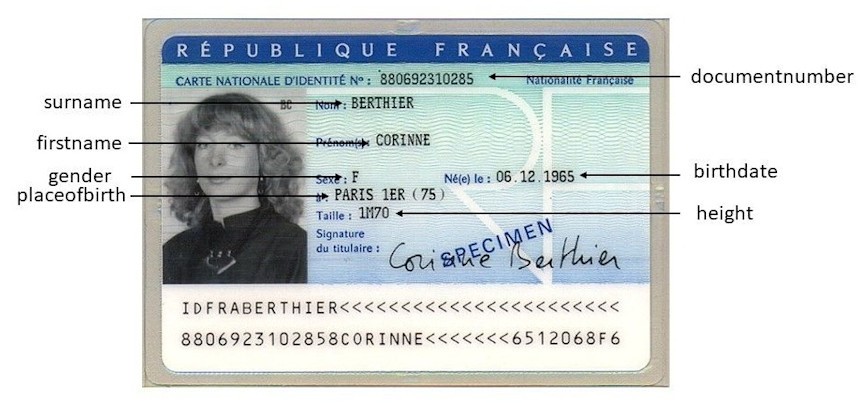

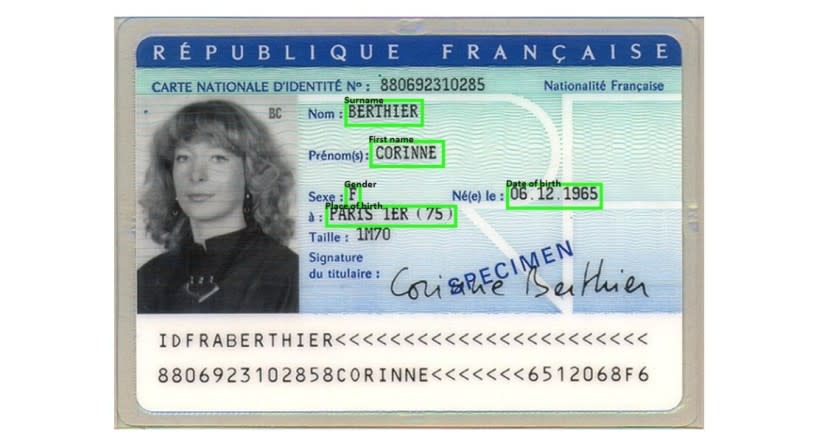

When building our Visual Inspection Zone (VIZ) algorithms, we defined VIZ fields per document type. This means that once a document has been cropped and recognized, our VIZ Detector will map out coordinates upon the image that dictate where to find the information we need to assess the validity of the document.

Naturally, the locations of different information fields vary per document. For instance, a French ID card will display a person’s birthday in the middle-right area of the card’s front-side, whereas an American passport will show the same information on the middle-left.

With the VIZ fields mapped, the algorithm can crop and zoom on each field to extract the information we need. The same mechanism of “bounding boxes” used in our Document Detector is applied to each VIZ field in the document.

Then, our VIZ Reader is tasked with reading these fields. Once the information has been read, we can apply certain criteria and generate conclusions about the user’s identity and personal details.

For instance, one of the basic checks we run verifies whether a user is of age to be accepted. This requires detecting where the birth date is on a document, reading the date, comparing this with today’s date, and finally calculating whether the person is at least 18 years old (or whatever age is required).

Another application of the VIZ reading service is the comparison of a user’s manual input to the automated reading results. This not only allows us to weed out inconsistent data entry, but also flags errors the algorithm may have made—educating our Optical Character Recognition (OCR) to run better in the future.

Delivering Better User Experiences

Ultimately, our AI serves to process identifications more accurately, at the fastest speed possible. Through the application of this technology, we’ve reduced our end-to-end KYC processing time to just 90 seconds at 99.98% fraud-detection accuracy, and that time will continue to decrease as we expand our coverage of different documents. As users demand smoother onboarding processes, reducing processing time becomes ever more critical to preventing drop off and delivering a streamlined customer experience.